Medical research underpins every treatment decision, clinical guideline, and health policy. But the phrase “evidence-based” is often used without a clear understanding of what counts as strong evidence, what constitutes weak evidence, and how both should be interpreted in practice. This becomes particularly important in the context of rare diseases, where high-quality evidence is often limited by small patient populations, heterogeneous presentations, and evolving scientific understanding.

This article explores the concept of evidence hierarchy, explains the difference between strong and weak evidence, and addresses a critical but often overlooked issue: expectation control. Understanding not just what the evidence says, but how reliable it is, allows patients, clinicians, and policymakers to make more informed and realistic decisions.

In medicine, evidence refers to systematically collected data used to answer a specific clinical or scientific question. This might include whether a treatment works, whether a diagnostic test is accurate, or whether a risk factor is associated with disease.

Evidence is not simply “information” or “opinion.” It is generated through structured methodologies designed to minimise bias, reduce uncertainty, and allow replication. However, not all evidence is created equal. The strength of evidence depends on how it was generated, how rigorously it was analysed, and how consistently it can be reproduced.

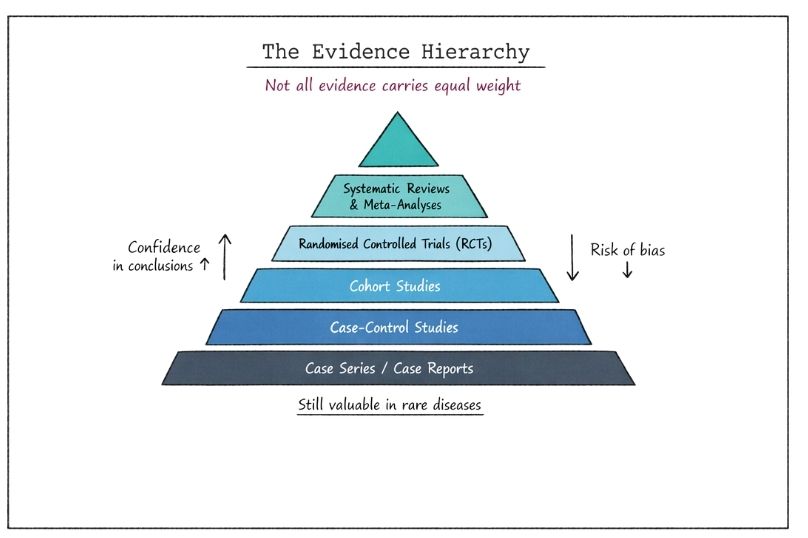

To assess the reliability of evidence, researchers use an evidence hierarchy. This is a conceptual ranking system that orders study designs based on their ability to produce valid and unbiased results.

At the top of the hierarchy are methods that minimise bias and allow causal inference. At the bottom are approaches that are more vulnerable to bias and confounding factors.

Systematic reviews and meta-analyses sit at the top of the hierarchy. A systematic review identifies, appraises, and synthesises all relevant studies addressing a specific question. A meta-analysis goes further by statistically combining results from multiple studies.
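To make "statistically combining results" concrete, here is a minimal sketch of one common approach, inverse-variance fixed-effect pooling. The function name `pool_fixed_effect` and the three study values are hypothetical and chosen only for illustration. A real meta-analysis would also assess heterogeneity and would often use a random-effects model instead.

```python
import math


def pool_fixed_effect(estimates, standard_errors):
    """Pool study effect estimates using inverse-variance (fixed-effect) weights.

    Each study is weighted by 1 / SE^2, so more precise studies count for more.
    Returns the pooled estimate and its standard error.
    """
    if not estimates or len(estimates) != len(standard_errors):
        raise ValueError("need one standard error per estimate")
    weights = [1.0 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical effect estimates (e.g. mean differences) from three small studies.
estimates = [0.30, 0.10, 0.25]
standard_errors = [0.20, 0.10, 0.15]

pooled, pooled_se = pool_fixed_effect(estimates, standard_errors)
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

The pooled standard error is smaller than that of any single study, which is why combining studies can yield a more precise estimate than any one of them alone, provided the studies are similar enough to be combined.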

Strengths:

Limitations:

Randomised controlled trials (RCTs) are considered the gold standard for evaluating interventions. Participants are randomly assigned to treatment or control groups, reducing selection bias.

Strengths:

Limitations:

Cohort studies are observational studies that follow groups of individuals over time to assess outcomes based on exposure to a factor or intervention.

Strengths:

Limitations:

Case-control studies compare individuals with a condition (cases) to those without (controls) to identify potential risk factors.

Strengths:

Limitations:

Case reports and case series describe individual patients or small groups of patients, often highlighting novel or unusual findings.

Strengths:

Limitations:

At the base of the hierarchy lies expert opinion, often informed by clinical experience rather than structured data.

Strengths:

Limitations:

Strong evidence is characterised by methodological rigour, reproducibility, and consistency across multiple studies. It typically comes from well-designed RCTs and systematic reviews.

Key features of strong evidence include:

Strong evidence allows for confident conclusions about causality and effectiveness. It forms the basis of clinical guidelines and regulatory decisions.

However, strong evidence is not synonymous with certainty. Even high-quality studies have limitations, and conclusions may evolve as new data emerge.

Weak evidence arises from study designs that are more susceptible to bias, have smaller sample sizes, or lack rigorous controls.

Common characteristics include:

Weak evidence does not mean incorrect or useless. It often represents early-stage knowledge, particularly in emerging fields or rare diseases. However, it requires cautious interpretation and should not be overgeneralised.

Rare diseases expose the limitations of traditional evidence hierarchies. Conducting large-scale RCTs may be impractical or impossible due to small patient populations. As a result, clinicians and researchers often rely on lower levels of evidence.

This creates a paradox:

In this context, case series, registries, and real-world data become critically important. While these may rank lower in the hierarchy, they can provide meaningful insights when interpreted appropriately.

Regulatory bodies increasingly recognise this challenge and may accept alternative forms of evidence for rare disease treatments, including adaptive trial designs and surrogate endpoints.

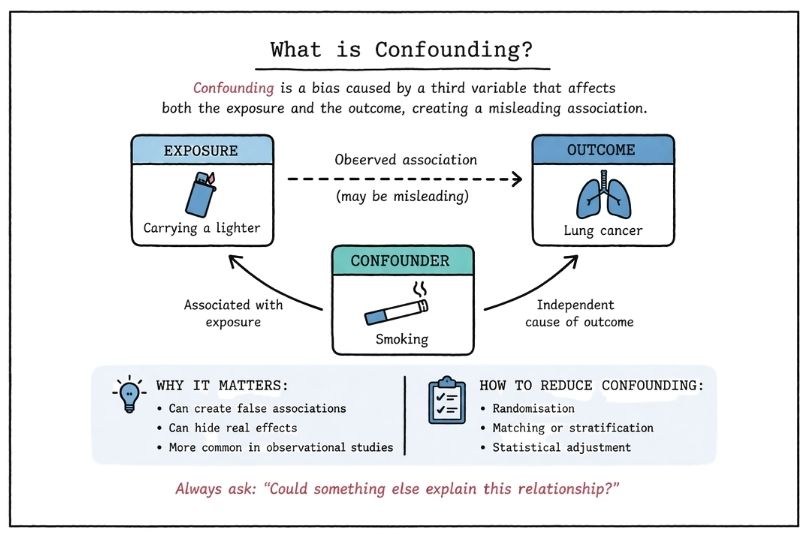

Bias is a systematic error that can distort study findings. Understanding bias is central to evaluating evidence strength.

Common types include:

Strong study designs aim to minimise these biases, but they can never be eliminated entirely. Critical appraisal requires identifying potential sources of bias and assessing their impact.

A common misunderstanding is equating statistical significance with meaningful impact.

A treatment may produce a statistically significant improvement that is too small to matter clinically. Conversely, a clinically meaningful effect may not reach statistical significance in small studies.

Both dimensions must be considered when interpreting evidence.
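The gap between statistical and clinical significance can be illustrated with a small worked example. The sketch below uses a simple two-sample z-test with a known common standard deviation, which is a simplifying assumption (a t-test would be more appropriate for the small study). All numbers, the function name `two_sided_p_value`, and the minimal clinically important difference of 5 points are hypothetical.

```python
import math


def two_sided_p_value(mean_diff, sd, n_per_group):
    """Two-sided p-value for a difference in means between two equal-sized groups.

    Assumes a known common standard deviation and a normal approximation.
    """
    se = sd * math.sqrt(2.0 / n_per_group)
    z = mean_diff / se
    # For a standard normal Z, P(|Z| > |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2.0))


MCID = 5.0  # hypothetical minimal clinically important difference, in scale points

# Large trial, tiny effect: 1-point improvement, 5000 patients per group.
p_large = two_sided_p_value(mean_diff=1.0, sd=10.0, n_per_group=5000)

# Small study, large effect: 8-point improvement, 8 patients per group.
p_small = two_sided_p_value(mean_diff=8.0, sd=10.0, n_per_group=8)

print(f"large trial: p = {p_large:.2g}, effect exceeds MCID: {1.0 >= MCID}")
print(f"small study: p = {p_small:.2g}, effect exceeds MCID: {8.0 >= MCID}")
```

Under these assumptions, the large trial is statistically significant at the conventional 0.05 threshold despite an effect well below the clinically important difference, while the small study shows a clinically relevant effect that does not reach significance.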

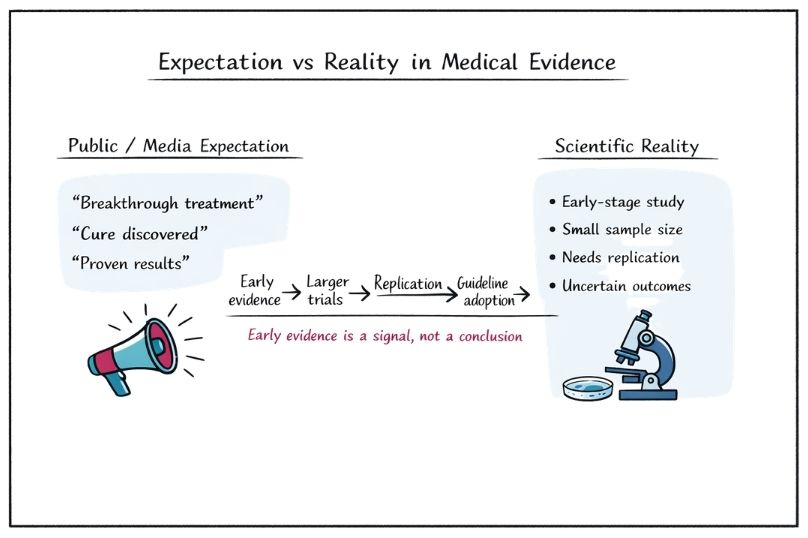

Expectation control refers to aligning interpretations of evidence with its actual strength and limitations. It is essential for preventing overconfidence, misinterpretation, and inappropriate decision-making.

In the absence of strong data, there is a tendency to overinterpret early findings. This is particularly common in:

This can lead to premature adoption of interventions that are later shown to be ineffective or harmful.

Even strong evidence carries uncertainty. Confidence intervals quantify it, while variability across populations and evolving data add to it.
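As an illustration of how a confidence interval expresses uncertainty, the following sketch computes a 95% Wilson score interval for a response rate. The function name `wilson_interval` and the patient counts are hypothetical. The same observed 60% response rate is shown for a small rare-disease case series and for a large trial.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score confidence interval for a proportion (z=1.96 gives about 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z ** 2 / n
    centre = (p + z ** 2 / (2.0 * n)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / n + z ** 2 / (4.0 * n ** 2)) / denom
    return centre - half_width, centre + half_width


# Same observed response rate (60%), very different sample sizes.
small_low, small_high = wilson_interval(6, 10)       # hypothetical case series
large_low, large_high = wilson_interval(600, 1000)   # hypothetical large trial

print(f"n=10:   60% response, 95% CI {small_low:.0%} to {small_high:.0%}")
print(f"n=1000: 60% response, 95% CI {large_low:.0%} to {large_high:.0%}")
```

The point estimate is identical in both cases, but the interval for the small series is far wider, so the headline figure alone overstates how much is actually known.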

Failure to acknowledge uncertainty can result in:

Media reporting often simplifies or exaggerates findings, presenting weak evidence as definitive. Headlines may imply causation where only association exists.

For example:

This distorts public understanding and creates unrealistic expectations.

Effective communication of evidence is a critical skill. It involves translating complex data into clear, accurate, and contextually appropriate information.

Key principles include:

In rare diseases, this often involves discussing uncertainty openly and collaboratively exploring options.

Real-world evidence refers to data collected outside controlled clinical trials, such as patient registries, electronic health records, and observational studies.

In rare diseases, real-world evidence plays a crucial role by:

While real-world evidence is generally considered lower in the hierarchy, advances in data analytics and study design are improving its reliability and utility.

To address limitations in traditional evidence generation, researchers are developing innovative trial designs, including:

These approaches are particularly valuable in rare diseases, where flexibility and efficiency are essential.

Regulatory agencies must balance the need for rigorous evidence with the urgency of unmet medical need.

In rare diseases, this often leads to:

This reflects a pragmatic approach: accepting some uncertainty in exchange for earlier access to potentially beneficial treatments.

One of the most problematic misconceptions is viewing evidence as either strong or weak in absolute terms.

In reality, evidence exists on a spectrum. A single study may be strong in design but limited in scope. Multiple weak studies may collectively provide meaningful insights.

Critical evaluation requires nuance, not binary categorisation.

Improving understanding of evidence hierarchy and strength is essential for:

Key components of evidence literacy include:

This is particularly important in rare diseases, where decisions often rely on incomplete information.

Understanding what constitutes strong and weak evidence is not an academic exercise. It is a practical necessity for making informed decisions in healthcare.

Strong evidence provides confidence, but not certainty. Weak evidence offers direction, but not definitive answers. Both have a role, particularly in areas where data are limited.

The key is not simply to ask “What does the evidence say?” but to ask:

By combining an understanding of evidence hierarchy with disciplined expectation control, it becomes possible to navigate complexity without oversimplification. This is especially critical in rare diseases, where the stakes are high and the evidence is often incomplete.

A structured, nuanced approach to evidence interpretation supports better decisions, more realistic expectations, and ultimately, more effective and responsible healthcare.